Exploring the Capabilities of the Gemma 3n Model

Introduction

Running AI directly on devices such as smartphones and wearables is increasingly valuable. Gemma 3n, the latest iteration in Google DeepMind's lightweight Gemma model series, is designed specifically for on-device computing. It combines multimodal input support with an architecture built to keep compute and memory usage low on edge hardware. This blog post covers the distinctive features of Gemma 3n and explains why developers and businesses should take notice.

Key Features of Gemma 3n

Gemma 3n includes several features aimed at running efficiently across a variety of devices and applications:

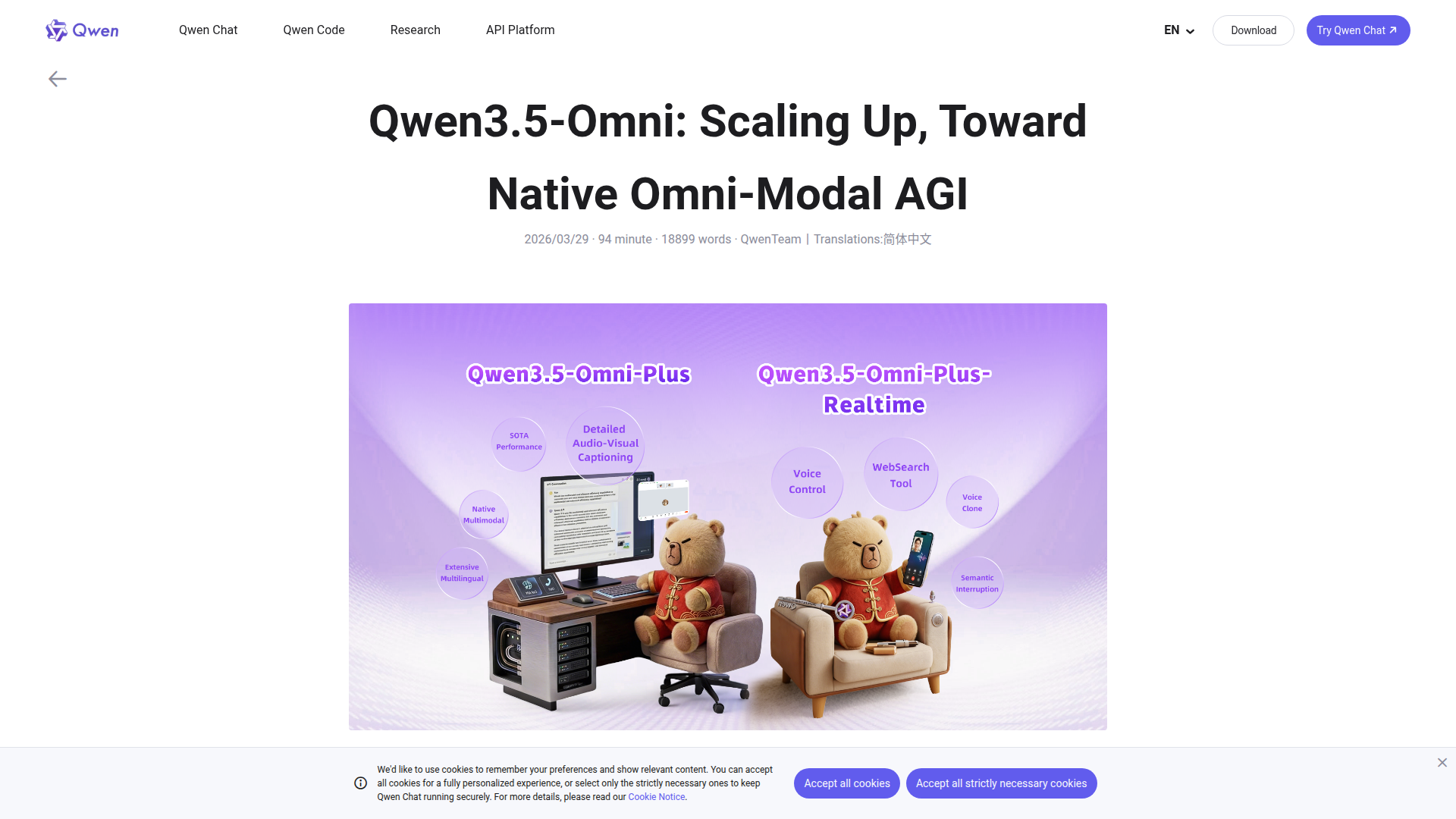

- Multimodal Support: This model handles text, image, audio, and video inputs, making it a versatile solution for complex inference tasks requiring the integration of various data types.

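- MatFormer Architecture: The Matryoshka Transformer (MatFormer) design nests smaller sub-models inside the full model, so a request can be served by activating only a subset of the parameters. This reduces compute and memory usage, and it means developers can extract smaller sub-models or dynamically adjust model size as needed.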

- Per-Layer Embedding (PLE) Caching: Per-layer embedding parameters can be cached to fast local storage instead of being held in the model's operating memory, which reduces the memory needed to run the model.

- Vision and Audio Processing: Gemma 3n pairs the MobileNet-V5 vision encoder with a dedicated audio encoder, so it can recognize and process both visual and audio data efficiently.

- Wide Language Support: Trained on over 140 languages, Gemma 3n can handle linguistic tasks on a global scale.

- Input Context: The model accepts up to 32,000 tokens of input context, which lets it handle long data sequences and more involved processing tasks.
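The nesting idea behind MatFormer can be illustrated with a toy feed-forward layer in which a smaller sub-model simply uses the first few hidden units of the full layer's weights. This is a minimal sketch for intuition only, not Gemma 3n's actual implementation; the function name `ffn_forward`, the toy weights, and the choice of ReLU are all assumptions made for the example.

```python
from typing import List

Matrix = List[List[float]]


def relu(value: float) -> float:
    """Rectified linear unit."""
    return value if value > 0.0 else 0.0


def ffn_forward(x: List[float], w_in: Matrix, w_out: Matrix,
                hidden_units: int) -> List[float]:
    """Run a feed-forward layer using only the first `hidden_units` neurons.

    w_in and w_out both have shape [hidden][d_model]. A smaller sub-model
    reuses a prefix of the same weights, so no separate copy is stored.
    """
    if not 0 < hidden_units <= len(w_in):
        raise ValueError("hidden_units must be between 1 and the layer width")
    out = [0.0] * len(w_out[0])
    for h in range(hidden_units):
        activation = relu(sum(w * xi for w, xi in zip(w_in[h], x)))
        for j, w in enumerate(w_out[h]):
            out[j] += activation * w
    return out


# Hypothetical toy weights: 4 hidden units, model width 2.
W_IN = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 1.0]]
W_OUT = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.0, 2.0]]

x = [1.0, 2.0]
small = ffn_forward(x, W_IN, W_OUT, hidden_units=2)  # nested sub-model
full = ffn_forward(x, W_IN, W_OUT, hidden_units=4)   # full model
print(small, full)
```

Both calls read from the same weight matrices; the smaller configuration just touches fewer rows, which is why compute and memory scale down with the chosen size.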

Model Specifications

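Gemma 3n comes in two sizes to accommodate varying needs. The "E" in each model name refers to effective parameters: each model can run with a smaller effective parameter count than the raw total listed in the table.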

| Model Name | Raw Parameters | Input Context Length | Output Context Length | Size on Disk |
|---|---|---|---|---|
| E2B | 5 billion | 32K | 32K minus request | 1.55 GB |
| E4B | 8 billion | 32K | 32K minus request | 2.82 GB |
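The "32K minus request" entry means the output budget shrinks as the request grows. A minimal sketch of that arithmetic follows, assuming the 32,000-token figure quoted in this post is the exact limit (the precise value should be checked against the official documentation); `max_output_tokens` is a hypothetical helper name, not part of any Gemma API.

```python
# Context window in tokens, taken from the figure quoted in this post.
# Assumption: the limit is exactly 32,000 tokens.
CONTEXT_WINDOW_TOKENS = 32_000


def max_output_tokens(request_tokens: int,
                      context_window: int = CONTEXT_WINDOW_TOKENS) -> int:
    """Return how many tokens remain for output after the request is counted."""
    if request_tokens < 0:
        raise ValueError("request_tokens must be non-negative")
    if request_tokens > context_window:
        raise ValueError("request does not fit in the context window")
    return context_window - request_tokens


print(max_output_tokens(2_000))  # a 2,000-token request leaves 30,000 tokens
```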

Availability and Integration

Gemma 3n is available on platforms such as Kaggle and Hugging Face, so it can be pulled into existing projects. In addition, NVIDIA supports Gemma 3n on its Jetson and RTX platforms, which broadens deployment options across different hardware configurations.
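As a rough illustration of the Hugging Face route, the sketch below builds a chat-style request and passes it to the `transformers` pipeline API. Several things here are assumptions rather than facts from this post: the repository id in `MODEL_ID`, the use of the `image-text-to-text` pipeline task for a text-only prompt, and a `transformers` release recent enough to include Gemma 3n support. Access to the weights may also require accepting the model license on Hugging Face first.

```python
from typing import Any, Dict, List

# Assumed repository id; verify the exact name on Hugging Face before use.
MODEL_ID = "google/gemma-3n-E2B-it"


def build_messages(prompt: str) -> List[Dict[str, Any]]:
    """Wrap a text prompt in the chat message structure used by the pipeline."""
    return [{"role": "user", "content": [{"type": "text", "text": prompt}]}]


def generate(prompt: str, max_new_tokens: int = 64) -> str:
    """Download the model (if needed) and return the assistant's reply text."""
    # Imported lazily so the helper above can be used without transformers.
    from transformers import pipeline

    pipe = pipeline("image-text-to-text", model=MODEL_ID)
    result = pipe(text=build_messages(prompt), max_new_tokens=max_new_tokens)
    # With chat input, the last message in generated_text is the model's reply.
    return result[0]["generated_text"][-1]["content"]


if __name__ == "__main__":
    print(generate("Summarize what on-device AI means in one sentence."))
```

Calling `generate` downloads several gigabytes of weights on first use, so it is best tried on a machine with enough disk space and memory for the chosen model size.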

Conclusion

Gemma 3n is built for efficient on-device computing. Its multimodal capabilities, efficient architecture, and broad language support make it a strong option for a wide array of applications in industries ranging from tech to healthcare. Developers interested in using Gemma 3n in their projects are encouraged to reach out to Automated Intelligence for tailored assistance in integrating and optimizing the model.
